Rivas, Pablo, and Mehang Rai. 2023. “Enhancing CNNs Performance on Object Recognition Tasks with Gabor Initialization.” Electronics 12, no. 19: 4072. https://doi.org/10.3390/electronics12194072

Our latest journal article, authored by Baylor graduate and former Baylor.AI lab member Mehang Rai, MS, marks an advancement in Convolutional Neural Networks (CNNs). The paper, titled “Enhancing CNNs Performance on Object Recognition Tasks with Gabor Initialization,” has not only garnered attention in academic circles but also received the prestigious Best Poster Award at the LXAI workshop at ICML 2023, a top-tier conference in the field.

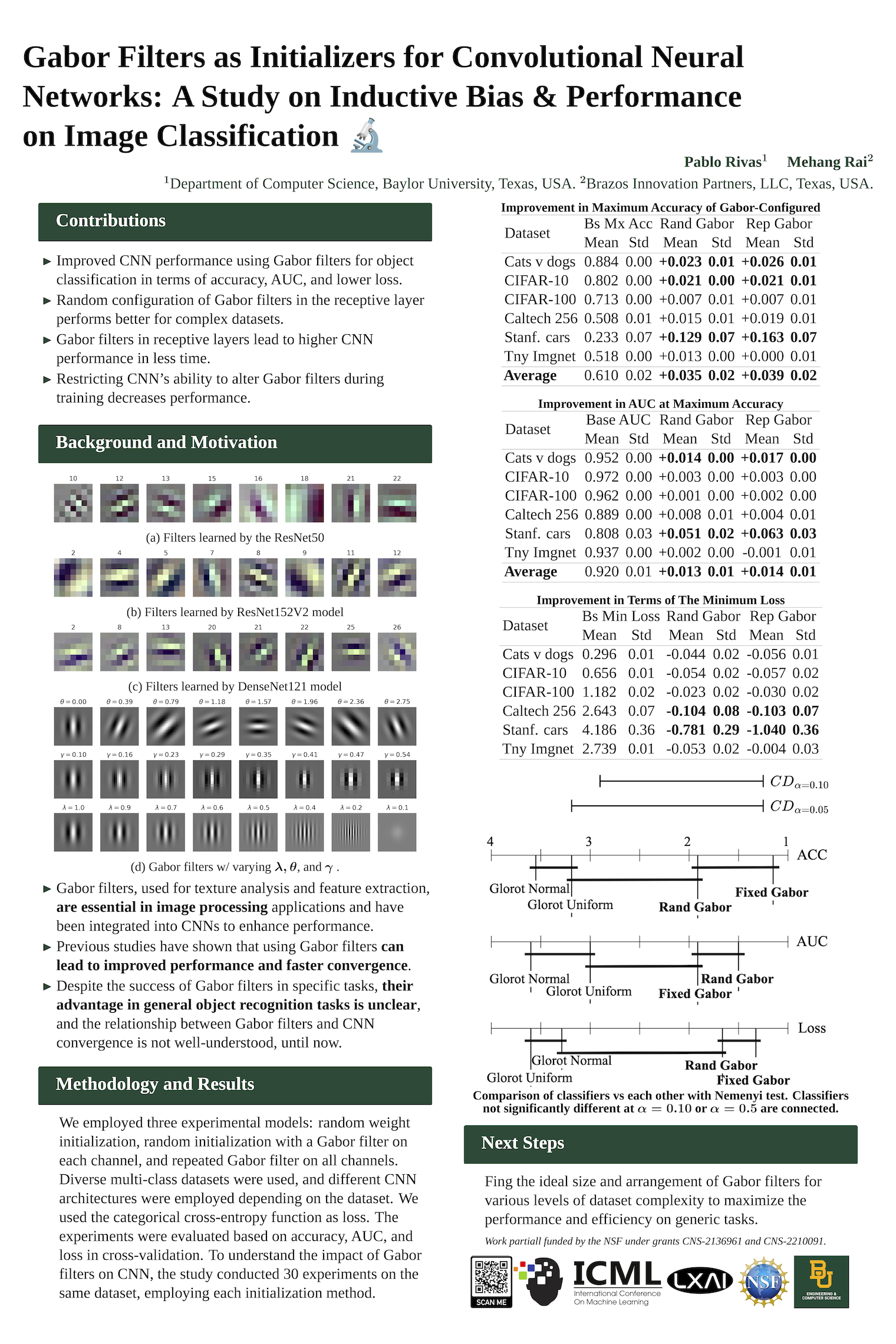

Pablo Rivas and Mehang Rai, “Gabor Filters as Initializers for Convolutional Neural Networks: A Study on Inductive Bias and Performance on Image Classification,” in The LXAI Workshop @ International Conference on Machine Learning (ICML 2023), July 2023.

A Journey from Concept to Recognition

Our journey with this research began with early discussions and progress shared here. The idea was simple yet profound: exploring the potential of Gabor filters, known for their exceptional feature extraction capabilities, in enhancing the performance of CNNs for object recognition tasks. This exploration led to a comprehensive study comparing the performance of Gabor-initialized CNNs against traditional CNNs with random initialization across six object recognition datasets.

Key Findings and Contributions

The results were fascinating to us. The Gabor-initialized CNNs consistently outperformed traditional models in accuracy, area under the curve, minimum loss, and convergence speed. These findings provide robust evidence in favor of using Gabor-based methods to initialize the receptive fields of CNN architectures. The technique had been explored before with little success, because researchers constrained the Gabor filters during training, which prevented gradient descent from optimizing the filters as needed for general-purpose object recognition. Our approach lifts that constraint.
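To make the idea of Gabor initialization concrete, here is a minimal sketch in plain Python of how a bank of Gabor kernels could be generated for a first convolutional layer. It uses the standard real-valued Gabor function (a Gaussian envelope multiplied by a cosine carrier). The function names `gabor_kernel` and `gabor_filter_bank`, the kernel size, and the sampling ranges for orientation, scale, and wavelength are illustrative assumptions, not the configuration used in the paper.

```python
import math
import random


def gabor_kernel(size, theta, sigma, lambd, gamma=0.5, psi=0.0):
    """Return a size x size real-valued Gabor kernel as a list of rows.

    theta: orientation (radians), sigma: Gaussian envelope width,
    lambd: carrier wavelength, gamma: aspect ratio, psi: phase offset.
    """
    half = (size - 1) / 2.0
    kernel = []
    for row in range(size):
        y = row - half
        values = []
        for col in range(size):
            x = col - half
            # Rotate the coordinates by the filter orientation.
            x_r = x * math.cos(theta) + y * math.sin(theta)
            y_r = -x * math.sin(theta) + y * math.cos(theta)
            # Gaussian envelope times cosine carrier.
            envelope = math.exp(-(x_r ** 2 + (gamma * y_r) ** 2) / (2.0 * sigma ** 2))
            carrier = math.cos(2.0 * math.pi * x_r / lambd + psi)
            values.append(envelope * carrier)
        kernel.append(values)
    return kernel


def gabor_filter_bank(n_filters, size, seed=0):
    """Build n_filters kernels with randomly drawn parameters.

    The sampling ranges below are hypothetical, chosen only for illustration.
    """
    rng = random.Random(seed)
    bank = []
    for _ in range(n_filters):
        theta = rng.uniform(0.0, math.pi)
        sigma = rng.uniform(2.0, 4.0)
        lambd = rng.uniform(4.0, 10.0)
        bank.append(gabor_kernel(size, theta, sigma, lambd))
    return bank


bank = gabor_filter_bank(n_filters=8, size=7)
print(len(bank), len(bank[0]), len(bank[0][0]))  # 8 7 7
```

In a deep learning framework, values like these would be copied into the weights of the first convolutional layer in place of the default random initialization, and the layer would be left trainable so that gradient descent can keep adjusting the filters.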

Our research contributes significantly to the field by demonstrating:

- Improved performance in object classification tasks with Gabor-initialized CNNs.

- Superior performance of random configurations of Gabor filters in the receptive layer, especially with complex datasets.

- Enhanced performance of CNNs in a shorter time frame when incorporating Gabor filters.

Implications and Future Directions

This study reaffirms the historical success of Gabor filters in image processing and opens new avenues for their application in modern CNN architectures. The results suggest potential improvements across a range of CNN applications, from medical imaging to autonomous vehicles.

As we celebrate this achievement, we also look forward to further research. Future studies could explore initializing other vision architectures, such as Vision Transformers (ViTs), with Gabor filters.

It’s a proud moment for us at the lab to see our research recognized on a global platform like ICML 2023 and published in a journal. This accomplishment is a testament to our commitment to pushing the boundaries of AI and ML research. We congratulate Mehang Rai for this remarkable achievement and thank the AI community for their continued support and recognition.